Every year in the Premier League, around November (though increasingly it seems earlier), the press starts to talk about the 'sack race'. Around this time, it is not uncommon to see headlines like 'X is one bad result from the sack' or 'Loss in game against Y could spell end for Z'. The focus on results has always seemed slightly odd to me. What if manager X inspires a great performance only to lose against the run of play? Should he be sacked? Conversely, if the team puts out its worst performance of the season but manages to scrape a point (or three), should that really influence your decision to sack the current boss? It is this type of thinking, putting results ahead of performances, that led to Paul Lambert being given a new contract at Aston Villa only to be replaced by Tim Sherwood later in the same season. It is also the kind of thinking that analytics can help you avoid. Given the huge sums of money at stake in the Premier League, it is no wonder that clubs find this sort of thing attractive.

Blame it on the TSR? Blame it on the SoTR? Blame it on the PDO...

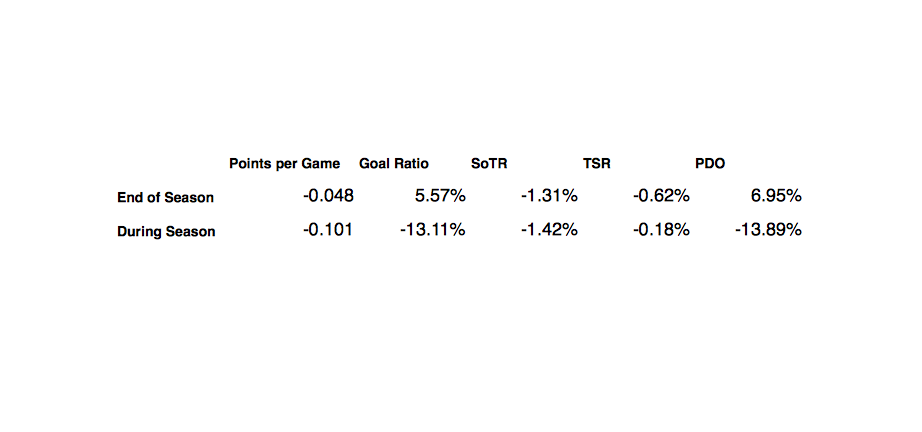

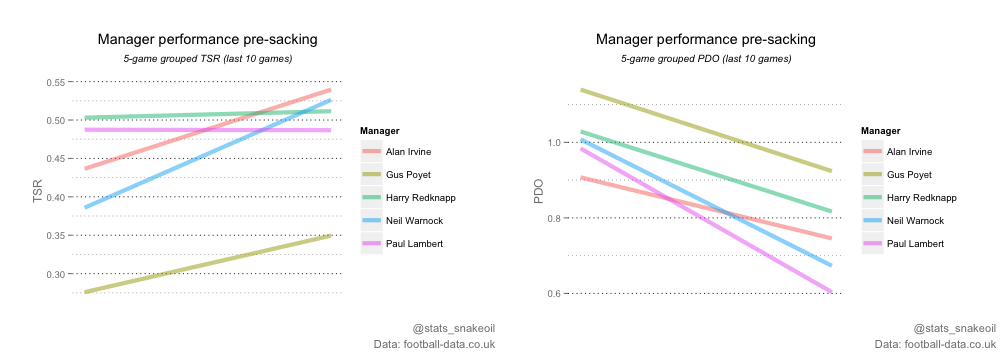

So, how much do highly variable measures of performance influence the sack race compared with the more stable underlying numbers? The first distinction we can make is between sackings that take place during the season and those that occur towards the end of it or in the off-season. Intuitively, decision makers, under less pressure in the close season, ought to be less likely to pull the trigger in response to a brief downturn in results. So does the data back this up? The table above shows the change in some basic performance metrics between the penultimate five games (the 10th to 6th games before the sacking) and the final five games (the 5th to 1st games before the sacking) of a manager's tenure, split between managers replaced towards the end of the season or in the off-season and those sacked during the season (2009/10 to 2014/15).
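
If you want to reproduce this kind of split on your own data, the windowing itself is simple. Below is a minimal Python sketch, assuming each manager's league results are available in chronological order and end with the last game before the sacking. `window_change` and `points_per_game` are hypothetical helpers for illustration, not the code used to build the table.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def points_per_game(points: Sequence[int]) -> float:
    """Average league points per match (3 for a win, 1 for a draw, 0 for a loss)."""
    return sum(points) / len(points)


def window_change(
    history: Sequence[T],
    metric: Callable[[Sequence[T]], float],
    window: int = 5,
) -> float:
    """Change in `metric` from the penultimate window to the final window.

    `history` is in chronological order and ends with the last match before
    the sacking. With window=5, the penultimate window is the 10th-6th games
    before the sacking and the final window is the 5th-1st.
    """
    if len(history) < 2 * window:
        raise ValueError(f"need at least {2 * window} matches, got {len(history)}")
    penultimate = history[-2 * window:-window]
    final = history[-window:]
    return metric(final) - metric(penultimate)


# Hypothetical points from a manager's last ten league games, oldest first.
last_ten = [3, 1, 3, 0, 1, 0, 0, 1, 0, 0]
# Final-window points per game minus penultimate-window points per game.
print(window_change(last_ten, points_per_game))
```

The metric is passed in as a function, so the same split can be reused for any of the measures discussed in this post, not just points.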

(For slightly lengthier definitions of the metrics here, see the StatsBomb definitions page. In short, Shots on Target Ratio (SoTR) and Total Shots Ratio (TSR) are very stable, i.e. repeatable, in the Premier League, which makes them two of the key underlying metrics for evaluating a team's 'true' performance level. PDO, on the other hand, the sum of a team's on-target conversion rate and save percentage, is very volatile and much less repeatable. For this reason, PDO is often described as a proxy for 'luck'.)

The first thing we can see is that managerial changes made at the end of the season are much less strongly associated with a recent downturn in points than mid-season sackings are. This suggests that the statistical profile of the performances immediately preceding a sacking differs depending on when in the season it occurs. While I'm naturally wary of inferring too much causation from this, it seems reasonable to suggest that post-season managerial changes are more often part of a longer-term plan than in-season ones. One example would be Tony Pulis's departure from Stoke City after he was unable to evolve the team's style, and his subsequent replacement by someone who could, in fact, do it on a warm Sunday night in Catalonia.
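
As a rough illustration of how these three metrics are calculated over a run of games, here is a small Python sketch. The `MatchShots` schema and the function names are hypothetical, PDO is expressed here as conversion plus save percentage multiplied by 100 (other scales are also in use), and own goals are ignored for simplicity.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class MatchShots:
    """Shot and goal counts for one team in one match (hypothetical schema)."""
    shots_for: int
    shots_against: int
    sot_for: int        # shots on target taken
    sot_against: int    # shots on target faced
    goals_for: int
    goals_against: int


def tsr(matches: Sequence[MatchShots]) -> float:
    """Total Shots Ratio: the team's share of all shots in its matches."""
    shots_for = sum(m.shots_for for m in matches)
    shots_against = sum(m.shots_against for m in matches)
    return shots_for / (shots_for + shots_against)


def sotr(matches: Sequence[MatchShots]) -> float:
    """Shots on Target Ratio: the team's share of all shots on target in its matches."""
    sot_for = sum(m.sot_for for m in matches)
    sot_against = sum(m.sot_against for m in matches)
    return sot_for / (sot_for + sot_against)


def pdo(matches: Sequence[MatchShots]) -> float:
    """On-target conversion plus save percentage, multiplied by 100.

    Assumes every goal comes from a shot on target (own goals are ignored).
    """
    sot_for = sum(m.sot_for for m in matches)
    sot_against = sum(m.sot_against for m in matches)
    conversion = sum(m.goals_for for m in matches) / sot_for
    save_pct = 1 - sum(m.goals_against for m in matches) / sot_against
    return 100 * (conversion + save_pct)


# Two made-up matches, just to exercise the functions.
run = [
    MatchShots(15, 10, 5, 4, 1, 2),
    MatchShots(9, 6, 3, 4, 0, 1),
]
print(tsr(run), sotr(run), pdo(run))
```

Counts are summed over the whole window before dividing, rather than averaging per-match ratios, so a single low-shot game does not get the same weight as a busy one.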

What about position in the table?

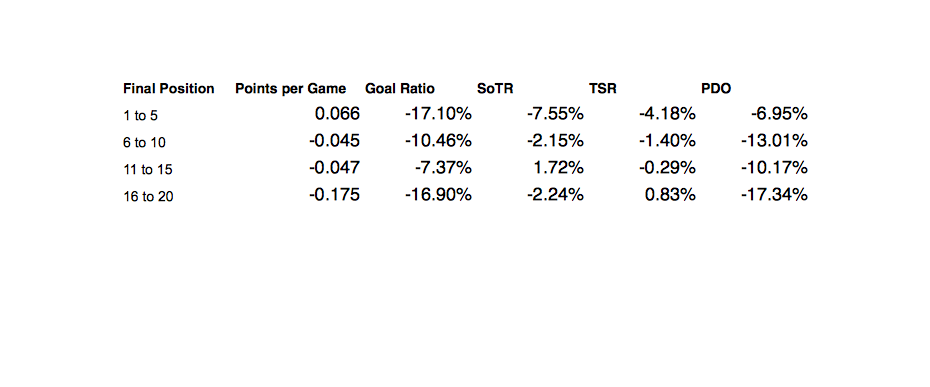

So, can we break this group up a bit more and see whether a team's position in the table affects the statistical profile? The table above shows the same metrics split by each team's final position in the Premier League table. It's worth noting that the sample sizes are naturally smaller for teams that finished in the top half (the top row, for instance, only includes Mark Hughes and Roberto Di Matteo, at Man City and Chelsea respectively).
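
Splitting by final league position is just a matter of bucketing each sacking and averaging within each bucket. A minimal Python sketch follows; the band edges are hypothetical (the exact splits behind the table are not listed here), and the input is assumed to be (final position, change in metric) pairs, such as the change in points per game between the two five-game windows.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable

# Hypothetical final-position bands, for illustration only; not the splits used in the table.
BANDS = [(1, 6, "1-6"), (7, 10, "7-10"), (11, 17, "11-17"), (18, 20, "18-20")]


def band_for(position: int) -> str:
    """Return the label of the band containing a final league position (1-20)."""
    for low, high, label in BANDS:
        if low <= position <= high:
            return label
    raise ValueError(f"position {position} is outside 1-20")


def mean_change_by_band(
    sackings: Iterable[tuple[int, float]],
) -> dict[str, tuple[int, float]]:
    """Map each band to (number of sackings, mean change in the chosen metric)."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for final_position, change in sackings:
        grouped[band_for(final_position)].append(change)
    return {label: (len(changes), mean(changes)) for label, changes in grouped.items()}


# Made-up (final position, change in points per game) pairs.
example = [(5, -0.8), (3, -1.2), (19, -1.0), (18, -0.6), (12, -0.4)]
print(mean_change_by_band(example))
```

Returning the sample size alongside each mean makes it obvious when a band rests on only a handful of sackings, as the top-half rows do here.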

Interestingly, the broad strokes for the other three groups remain pretty similar: a drop in points yield driven largely by a swing in conversion.

Ultimately, I believe this is an area where analytics can immediately help clubs make better decisions. We have only used basic measures of performance here, and even without delving into more advanced metrics like expected goals, we can see patterns in managerial departures. Of course, this means nothing if the insight isn't actionable; no-one wants a doctor who can diagnose an illness but not treat it. I would suggest that the evidence here supports the view that the importance of results is overestimated relative to actual performance. Unfortunately for Alan Shearer, the table does lie ('almost always', according to Rasmus Ankersen), and clubs could improve their firing practices by focusing on underlying performances and progress rather than just results. Statistical models, though not the only option, are useful here for gaining an alternative perspective, and hopefully one that won't mistake a scrappy 1-0 win for "grit" or "spirit". All clubs need to do then is make sure they hire the right guy. Easy, right?

Follow me on Twitter:

@stats_snakeoil